What Educators Need to Know About FERPA, Public Records, and AI Privacy

Here’s what teachers should understand about AI chat logs, student data, public records laws, and the risks of assuming digital conversations are private.

TL;DR — Key Ideas

AI chat logs may be discoverable in legal proceedings if they involve public business, student issues, HR matters, or communications conducted on district-managed devices or accounts.

FERPA does not outright ban AI use, but educators should avoid entering personally identifiable student information into unapproved AI platforms.

Deleted chats are not always truly deleted. AI platforms vary widely in how they store, retain, and archive conversations.

Consumer AI tools and district-approved enterprise AI systems are not the same. Privacy protections, retention policies, and legal safeguards can differ dramatically.

California educators should pay particular attention to public records laws. Communications related to school business may qualify as public records even on personal devices.

The safest rule remains simple: Never write anything electronically that you would not want quoted publicly in the future.

About 12 years ago, I received a call on my work phone from an attorney for one of the major airlines. He told me that my email records were going to be subpoenaed as part of a lawsuit a former consultant had filed against the airline.

My first reaction was confusion. My second reaction was to download and save in a Word document every email to or from that consultant over the course of his contract work. I was able to do that because I have a longstanding habit of saving every email. I do this under the operating assumption that some day, for some reason, I might need to turn over my emails to a court.

Why would any educator care about a legal tussle? Earlier this week I saw a one-line notice in an AI newsletter mentioning that chat logs may be subject to subpoena. If you ever ask a chatbot (Gemini, ChatGPT, Copilot, Claude, etc.) for advice on how to deal with a problematic coworker, administrator, parent, or student, there is a chance that one day those chat logs could become part of a public court record.

In California this is an issue of particular concern to educators. Under the California Supreme Court’s 2017 ruling in City of San Jose v. Superior Court, communications about the conduct of public business can be public records even when they are sent or stored on a public employee’s personal account or device. A subpoena is a court order for evidence in a lawsuit; a California Public Records Act request, by contrast, can be made by any member of the public (including parents or journalists) for any reason.

The broader lesson is that users should not assume AI conversations are inherently private simply because they feel conversational or ephemeral.

In this post I’ll explore what teachers should know and do to keep themselves, as much as possible, on legal safe ground. Forewarned is forearmed.

AI Chats May Be Treated Like Other Digital Records

Courts have long held that emails, texts, and internal messages can be subpoenaed. AI chats are increasingly being viewed the same way — especially if they are stored by a school district or tied to a teacher account.

In practice, keep these ideas in mind:

Conversations with AI tools (lesson planning, student scenarios, emails drafted, etc.) may not be private in a legal sense.

If a dispute arises (parent complaint, special education case, HR issue), those records could potentially be requested.

As you can imagine, student information is the biggest risk area. The legal sensitivity increases significantly if chats include personally identifiable student information (PII).

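This intersects with laws like FERPA in the U.S., which generally bars disclosing personally identifiable information from student education records without consent. FERPA does not ban AI use outright, but entering student names, IDs, or detailed situations into AI tools that create records outside school-controlled systems can expose an educator to an improper disclosure.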

Educators must keep in mind that “private” or “deleted” doesn’t guarantee protection. Even if you delete a conversation, or the platform claims chats are not used for training, it does not automatically mean the data is gone or inaccessible in a legal process.

AI companies are generally not required to preserve every chat indefinitely. Instead, they face overlapping duties that come from data protection laws, their own retention policies, and case‑specific court orders that can require preservation and production of chat logs as evidence.

In practice, this means a provider may keep chat records for operational reasons under its terms of service. If a lawsuit or government investigation arises, a judge can order a company to preserve relevant chat logs for litigation or investigation.

School districts are struggling to keep pace with rapid changes in AI technology, vendor policies, and evolving legal guidance. Many districts do not yet have clear AI usage policies and haven’t clarified data retention or legal exposure. Until policies catch up, caution is warranted.

The education law firm Atkinson, Andelson, Loya, Ruud & Romo advises school leaders that using generative AI and transcription tools in confidential meetings raises significant student‑privacy and records‑disclosure risks, and recommends tight controls over what is recorded or sent to third‑party AI platforms.

The Legal Gray Zone

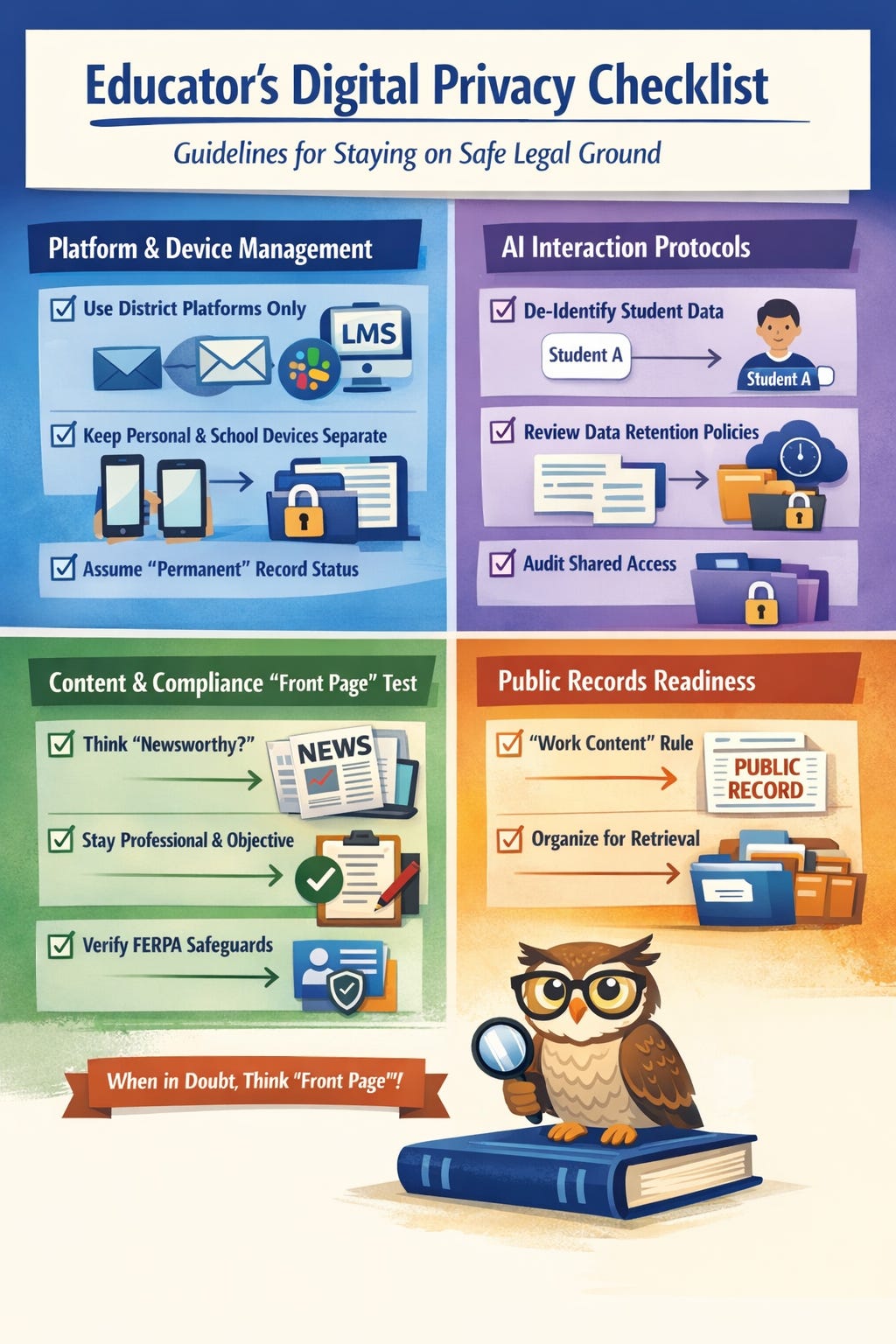

That policy lag leaves many teachers making judgment calls without clear institutional guidance. To make the issue more practical, I asked several AI systems to help generate a working draft of what an educator-focused digital privacy checklist might include.

As you read these suggestions, keep in mind that neither I nor the AIs I consulted should be viewed as the final authority. Think of these guidelines as rules of thumb rather than unassailable legal advice.

1. Platform & Device Management

[ ] Use Dedicated Channels for Public Business: Conduct all school-related discussions (grading, behavior, policy) on district-sanctioned platforms (email, LMS, or district Slack).

[ ] Segregate Personal Devices: Avoid using personal SMS or private messaging apps for “quick updates” about students. If a message involves a student, it belongs in the official record.

[ ] Assume “Permanent” Record Status: Treat every digital interaction, even those with “auto-delete” or “disappearing” features, as a permanent record that could surface in legal discovery.

2. AI Interaction Protocols

[ ] De-identify Student Data: When using generative AI for drafting IEP goals or feedback, use placeholders (e.g., “Student A” or “Learner 1”) instead of real names or unique identifiers.

[ ] Review Data Retention Policies: Be aware of how long your AI tool stores “deleted” history. Many enterprise accounts have different retention rules than consumer accounts. Note: This is what Google says about their education accounts: “In an “Enterprise” environment like a school district, the administrator — not the individual teacher — often has the final say over how long data actually exists on Google’s servers.”

[ ] Audit Shared Workspace Access: If using collaborative AI environments (like shared Gemini folders), ensure only authorized personnel have access to prevent accidental data leaks.
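
For readers comfortable with a little scripting, the de-identification item above can be sketched in Python. This is only an illustration under stated assumptions: the function name `deidentify`, the “Learner 1” numbering, and the sample names are all hypothetical, and the script catches only the exact names you list. It will not catch nicknames, ID numbers, or descriptive details that could still identify a student, so it is a habit-building aid rather than a FERPA compliance tool.

```python
import re


def deidentify(text, student_names):
    """Swap each listed name for a placeholder such as "Learner 1".

    Returns the scrubbed text plus a key (placeholder -> real name) that
    should stay on a district-controlled system, never in the AI chat.
    """
    if not student_names:
        return text, {}

    # Lowercased name -> placeholder, numbered in the order given.
    placeholders = {
        name.lower(): f"Learner {number}"
        for number, name in enumerate(student_names, start=1)
    }
    key = {placeholders[name.lower()]: name for name in student_names}

    # Longest names first so "Maria Lopez" wins over a shorter "Maria".
    ordered = sorted(student_names, key=len, reverse=True)
    pattern = re.compile(
        r"\b(?:" + "|".join(re.escape(name) for name in ordered) + r")\b",
        flags=re.IGNORECASE,
    )
    scrubbed = pattern.sub(
        lambda match: placeholders[match.group(0).lower()], text
    )
    return scrubbed, key


# Hypothetical example: the names below are invented for illustration.
note = "Maria Lopez needs support with fractions. Jamal Reed finished early."
scrubbed, key = deidentify(note, ["Maria Lopez", "Jamal Reed"])
print(scrubbed)
# Learner 1 needs support with fractions. Learner 2 finished early.
```

Only the scrubbed text would go into the AI tool. The key that maps placeholders back to real names stays with you, so you can restore the names after the draft comes back.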

3. Content & Compliance (The “Front Page” Test)

[ ] Apply the Professionalism Filter: Before hitting send, ask: “Would I be comfortable with a parent, a journalist, or a judge reading this aloud in two years?”

[ ] Avoid Subjective Commentary: Keep digital notes on student behavior objective and clinical. Avoid venting or using informal jargon that could be misinterpreted if the records are ever subpoenaed.

[ ] Verify FERPA Guardrails: Ensure that any chat log containing Personally Identifiable Information (PII) is handled with the same security as a physical student file.

4. Public Records (CPRA) Readiness

[ ] Understand the “Work Content” Rule: Remember that in California, the content of the message (conduct of public business) makes it a public record, regardless of whether it’s on a personal or work phone.

[ ] Organize for Retrieval: Keep your professional communications organized. If a Public Records Act request is filed, being able to quickly locate relevant logs shows due diligence and may reduce the need for a broader “device search.”

I can boil these down to the one communication rule I have followed since 1992, when I became one of the first teachers in my state to get an email account via the California Technology Assistance Project: Never write anything in an electronic format that you wouldn’t be comfortable seeing on the front page of your local newspaper.

Final Thoughts

The pace of AI adoption in schools has far exceeded the pace of policy development. Until districts, states, and courts provide clearer guidance, educators should assume that AI interactions involving school business may eventually be reviewed by administrators, attorneys, parents, or journalists.

That does not mean teachers should avoid AI tools. Rather, they should approach them with the same professionalism and caution they would apply to email, student records, or internal memos.

The safest rule remains the oldest one: Never write anything electronically that you would not be comfortable seeing quoted publicly later.

In the age of generative AI, that advice matters more than ever.

This work is licensed under a Creative Commons Attribution 4.0 International License (CC BY 4.0). You are free to share and adapt this material with attribution.